Over half of SaaS companies have already reduced headcount due to AI. That stat from the 2025 High Alpha SaaS Benchmarks Report — drawn from 800+ founders and operators globally — isn’t a warning about the future. It’s a description of the present.

For early-stage founders, this raises an urgent and practical question: “How do I know if my team is structured for Generation AI?”

The benchmarks give you a clear answer, broken down by ARR stage. This post walks through what the data actually says — and what it means for how you hire, organize, and build.

The New Baseline: ARR Per Employee by Stage

The single most useful efficiency metric for early-stage SaaS companies is ARR per employee. It tells you whether your revenue is growing faster than your headcount — and whether you’re building leverage into your business or just adding bodies.

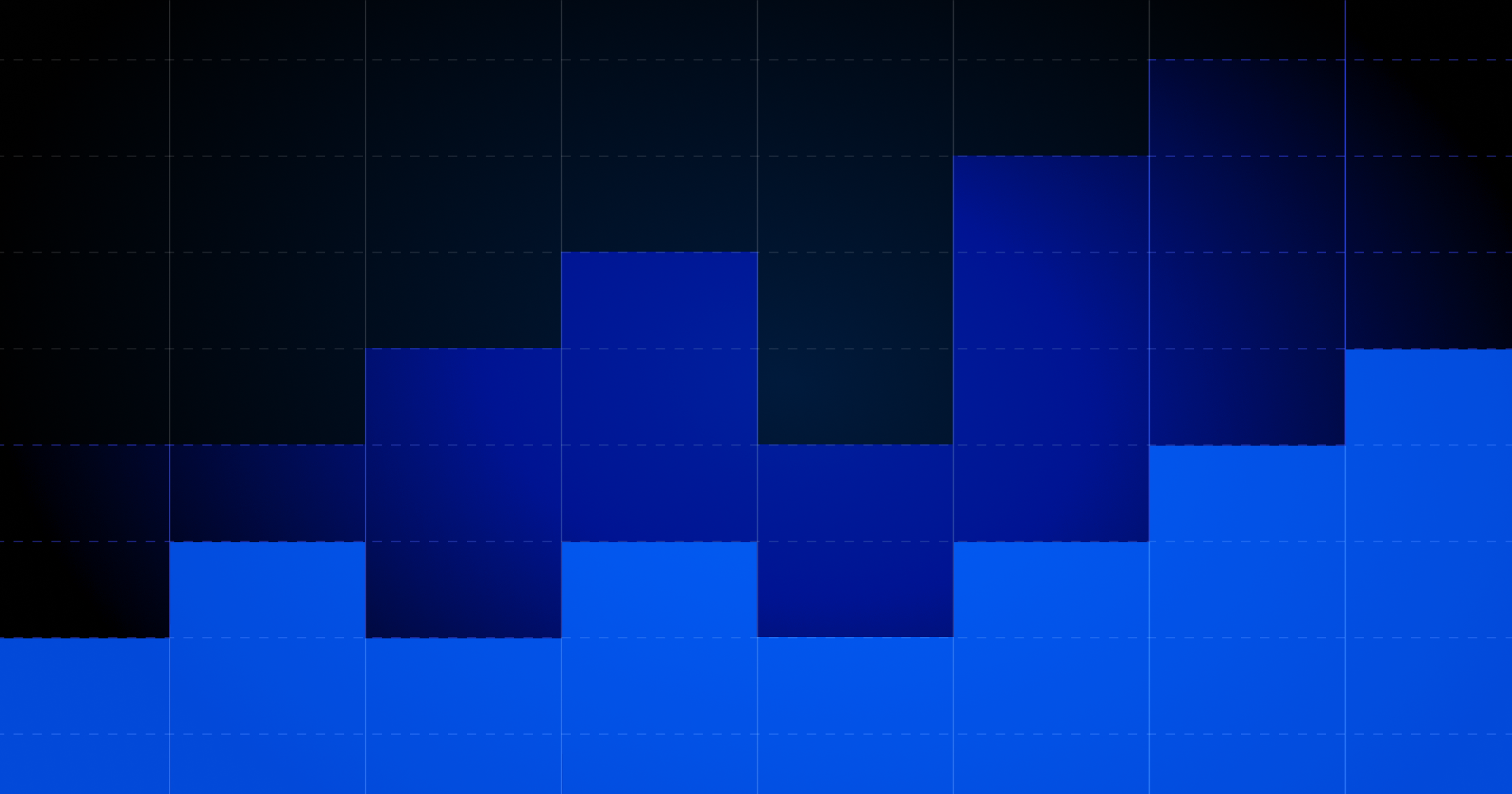

Here’s where the benchmarks land in 2025:

Good and Great ARR Per Employee by ARR Range

The jump from $5–20M to $20–50M is the most telling: median ARR per employee rises 61%. This is where AI-augmented team structures start to compound. The top-quartile companies above $50M are generating nearly $400K per employee — on par with the median public SaaS company.

Founder Takeaway

If you're at $1–5M ARR and generating less than $100K per employee, your team structure deserves scrutiny. You don't need to reduce headcount, but you should understand which roles are generating leverage and which are adding cost without compounding returns.
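To make the metric concrete, here is a minimal Python sketch of the arithmetic behind ARR per employee. The function names and the example company are hypothetical. The only figures taken from the benchmarks are the $1–5M ARR range and the $100K-per-employee threshold mentioned above.

```python
def arr_per_employee(arr: float, headcount: int) -> float:
    """Annual recurring revenue divided by headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return arr / headcount


def is_building_leverage(arr_start: float, arr_end: float,
                         headcount_start: int, headcount_end: int) -> bool:
    """True when ARR per employee rose over the period,
    which means ARR grew faster than headcount."""
    before = arr_per_employee(arr_start, headcount_start)
    after = arr_per_employee(arr_end, headcount_end)
    return after > before


def deserves_scrutiny(arr: float, headcount: int,
                      threshold: float = 100_000) -> bool:
    """Flag a company in the $1-5M ARR range whose ARR per employee
    falls below the threshold (the $100K figure from the takeaway)."""
    in_range = 1_000_000 <= arr <= 5_000_000
    return in_range and arr_per_employee(arr, headcount) < threshold


# Hypothetical company: grew from $2M ARR with 30 people
# to $3M ARR with 36 people.
print(round(arr_per_employee(3_000_000, 36)))               # 83333
print(is_building_leverage(2_000_000, 3_000_000, 30, 36))   # True
print(deserves_scrutiny(3_000_000, 36))                     # True
```

In this made-up case the company is building leverage (ARR per employee went up) but still sits below the $100K mark, which is the kind of situation where a closer look at role-by-role leverage is more useful than a headcount cut.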

Moving from AI Pilots to Playbooks

The data on internal AI adoption reveals a meaningful gap between early-stage and later-stage companies — and it’s not the direction you might expect.

Smaller companies (under $1M ARR) are more deeply embedding AI into their daily workflows: 43% report full workflow integration. Larger companies, by contrast, are more likely to still be piloting or in selective team adoption mode.

Culture of Internal AI Adoption Among SaaS Companies

The interpretation from the report: smaller companies were built with AI productivity baked in from the start. Larger organizations are trying to convert existing processes.

If you’re building at the early stage, this is an advantage — but only if you operationalize it. The report also flags that most companies are still measuring AI impact qualitatively:

- 35% rely on informal team feedback

- Only 13% monitor specific KPIs tied to AI impact

- Only 9% use analytics dashboards

How Teams Measure Adoption of AI Internally

This matters because qualitative measurement limits your ability to make hiring and structure decisions based on real productivity data. The founders building durable, efficient companies will be the ones who close this loop.

Founder Takeaway

Pick two or three workflows — sales outreach, support triage, code review, content production — and build concrete KPIs around AI's impact on each. Measurement is what separates experimentation from operational advantage.
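One way to move from informal feedback to a concrete KPI is to record a baseline for each workflow before AI tooling is introduced and compare it with the current value. The sketch below is an illustration only: the `WorkflowKpi` class, the metric names, and all numbers are hypothetical and do not come from the report.

```python
from dataclasses import dataclass


@dataclass
class WorkflowKpi:
    workflow: str
    metric: str
    baseline: float               # value measured before AI was introduced
    current: float                # value measured after
    lower_is_better: bool = True  # e.g. response time; False for volume metrics

    def improvement_pct(self) -> float:
        """Percent improvement relative to the baseline.
        Positive means the workflow got better."""
        if self.baseline == 0:
            raise ValueError("baseline must be non-zero")
        change = (self.current - self.baseline) / self.baseline * 100
        return -change if self.lower_is_better else change


# Hypothetical measurements for two of the workflows named above.
kpis = [
    WorkflowKpi("support triage", "minutes to first response", 40, 30),
    WorkflowKpi("sales outreach", "meetings booked per rep per week", 8, 10,
                lower_is_better=False),
]

for kpi in kpis:
    print(f"{kpi.workflow}: {kpi.metric} improved {kpi.improvement_pct():.1f}%")
```

Tracking the same two or three numbers each month gives you a quantitative basis for hiring and structure decisions, rather than relying on team sentiment alone.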

Where Headcount Reductions Are Actually Happening

Of the companies that confirmed AI-driven headcount reductions, here’s where those reductions concentrated:

Percent of Companies Reducing Headcount Due to AI by Functional Area

Engineering is the standout — by a wide margin. AI coding tools are compressing what previously required multiple engineers. CS & support and marketing follow, reflecting how AI-native tools have matured fastest in those areas.

But here’s what the headline misses: overall departmental mix across companies has stayed remarkably stable. Teams are shrinking, but the proportional breakdown — roughly 40% engineering/product/design, 25% GTM, 25% CS/support, 10% G&A — hasn’t shifted dramatically. What’s changed is that each function is operating with fewer people generating more output.

Percent of Total Employees by Department
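The point about a stable mix can be checked with simple arithmetic. The sketch below uses a hypothetical 100-person company that matches the rough 40/25/25/10 split and shrinks every function by the same proportion. The headcounts and the `department_mix` helper are assumptions for illustration, not figures from the report.

```python
def department_mix(headcount: dict[str, int]) -> dict[str, float]:
    """Return each department's share of total headcount, in percent."""
    total = sum(headcount.values())
    if total <= 0:
        raise ValueError("total headcount must be positive")
    return {dept: round(100 * count / total, 1)
            for dept, count in headcount.items()}


# Hypothetical company before and after a proportional reduction.
before = {"Eng/Product/Design": 40, "GTM": 25, "CS/Support": 25, "G&A": 10}
after = {"Eng/Product/Design": 32, "GTM": 20, "CS/Support": 20, "G&A": 8}

print(department_mix(before))
print(department_mix(after))
print(department_mix(before) == department_mix(after))  # True
```

Total headcount falls from 100 to 80 here, yet each department's share is unchanged, which is the pattern the benchmarks describe: smaller teams with the same proportional shape.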

The Strategic Implication

This isn't about wholesale restructuring. It's about raising the bar for what each hire needs to produce, and being deliberate about which functions can be augmented and which genuinely require human judgment and relationships.

The ARR-Stage Checklist: Where Advantages Live

Companies under $1M ARR have advantages that larger companies don't, and vice versa. When we looked at the data, here's what stood out about each cohort and what founders can use to their advantage.

Under $1M ARR

In the earliest stages, you're much more likely than larger companies to report that AI is deeply integrated into day-to-day work. Larger companies are mostly still experimenting with or piloting AI solutions. The ability to adopt new technologies quickly is a unique advantage for early-stage software companies, especially those founded in Generation AI.

As you scale, maintain this culture of AI adoption with every hire you add to the team.

$1–5M ARR

As you start to gain momentum in the $1–5M ARR range, keep a close eye on ARR per FTE. If you scale too quickly without true product-market fit, you'll almost certainly find that the investment in headcount doesn't translate directly into ARR.

Additionally, these teams reported the highest "widespread adoption" of AI internally. Start thinking about how to measure AI impact effectively, and focus on the areas where you see the biggest gains.

$5–20M ARR

Consider how you're measuring AI impact: is it qualitative or quantitative? The data shows that larger companies adopt AI at a slower rate than early-stage companies. Unlocking the transformative power of AI across your organization can improve everything from ARR per employee to CAC. The first step is measuring your AI pilots and experiments to understand what's working and what isn't.

The Bottom Line

Our benchmarks don’t tell a story about AI replacing teams. They tell a story about leverage — about the compounding advantage that comes when each person in your organization is producing materially more output because AI has absorbed the repetitive, low-judgment work.

As teams continue to operate more efficiently, the question for early-stage founders isn’t whether to build with AI, but whether you’re building the team structures, measurement frameworks, and culture of leverage that let AI compound over time.

The benchmarks show you what that looks like in practice. Now it’s a matter of execution.